Consistent Web Search and Fetch Across Every Model

Introducing openrouter:web_search and openrouter:web_fetch, two new tools that any model with tool-calling support can call during a request. When a model decides to use one, OpenRouter executes it server-side and returns the result to the model, so no client-side implementation is required.

- Web Search: a tool for agentic search that the model can call anywhere from zero to N times per request, choosing its own queries and timing.

- Web Fetch: a tool for retrieving full page content from any URL, commonly used for pages found during search.

Try it now in the chatroom by clicking the tool icon, and read the docs for the API details.


Swap Models Without Swapping Tools

Each model provider has its own built-in web search tool with a different schema. Switch models or providers, and you're stuck rewriting how you define and configure the tool and how you parse its results. The same behaviors are not guaranteed to be available either, which is a problem if you need features like strictly enforced blocked domains.

These new server tools give you one consistent way of enabling search and fetch. Specify {"type": "openrouter:web_search"} once, and the tool definition, invocation, and result format stay identical across all tool-calling models. If you want identical search behavior as well, you can pin a search engine such as Exa or Parallel, so the results coming back to the model are consistent whether the request routes to GPT-5.5, Claude, or Kimi.
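A minimal sketch of what "specify once" can look like in practice. It assumes an OpenAI-style chat completions body (`model`, `messages`, `tools`), and the model slugs are placeholders rather than real identifiers; only the tool object itself is taken from the text above.

```python
import json

# The tool definition quoted in the text; written once and reused unchanged.
WEB_SEARCH_TOOL = {"type": "openrouter:web_search"}


def build_request(model: str, prompt: str) -> dict:
    """Build a request body in which only `model` varies between calls.

    Assumption: the body follows the common chat-completions layout.
    """
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "tools": [WEB_SEARCH_TOOL],
    }


if __name__ == "__main__":
    # Placeholder slugs for illustration only.
    for slug in ("vendor-a/model-x", "vendor-b/model-y"):
        print(json.dumps(build_request(slug, "Summarize today's AI news."), indent=2))
```

Swapping the model changes a single string; the `tools` array stays byte-for-byte the same.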

Web Search

Web search supports four engines:

| Engine | How it works | Pricing |
|---|---|---|
| Auto (default) | Uses native if the provider supports it, otherwise Exa | Varies |
| Native | The provider's built-in search (OpenAI, Anthropic, Google, xAI, Perplexity) | Provider pricing |
| Exa | Passes the search to Exa and bills from your OpenRouter credits | $0.004 per result |
| Parallel | Passes the search to Parallel and bills from your OpenRouter credits | $0.005 per request. Includes up to 10 results, then $0.001 per additional result. |
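To make the Exa and Parallel pricing concrete, here is a small arithmetic sketch based on the rates in the table. It assumes that "per request" for Parallel means one search call, and it uses `Decimal` to avoid floating-point rounding; the function names are illustrative only.

```python
from decimal import Decimal

# Rates copied from the pricing table above (USD).
EXA_PER_RESULT = Decimal("0.004")
PARALLEL_PER_REQUEST = Decimal("0.005")
PARALLEL_INCLUDED_RESULTS = 10
PARALLEL_PER_EXTRA_RESULT = Decimal("0.001")


def exa_search_cost(results: int) -> Decimal:
    """Exa bills a flat amount for every result returned."""
    return EXA_PER_RESULT * results


def parallel_search_cost(results: int) -> Decimal:
    """Parallel bills per search, plus a surcharge past the included results."""
    extra = max(0, results - PARALLEL_INCLUDED_RESULTS)
    return PARALLEL_PER_REQUEST + PARALLEL_PER_EXTRA_RESULT * extra


if __name__ == "__main__":
    for n in (5, 12):
        print(n, exa_search_cost(n), parallel_search_cost(n))
```

Under these assumptions, a single search returning 5 results costs $0.020 with Exa and $0.005 with Parallel; at 12 results the figures are $0.048 and $0.007.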

Each engine has different strengths. Native search is tightly integrated with the provider's model. Exa and Parallel add configurable result context size (search_context_size), which native engines ignore. Most engines support domain filtering (allowed_domains, excluded_domains).

You can configure this in the chatroom UI or via the API:

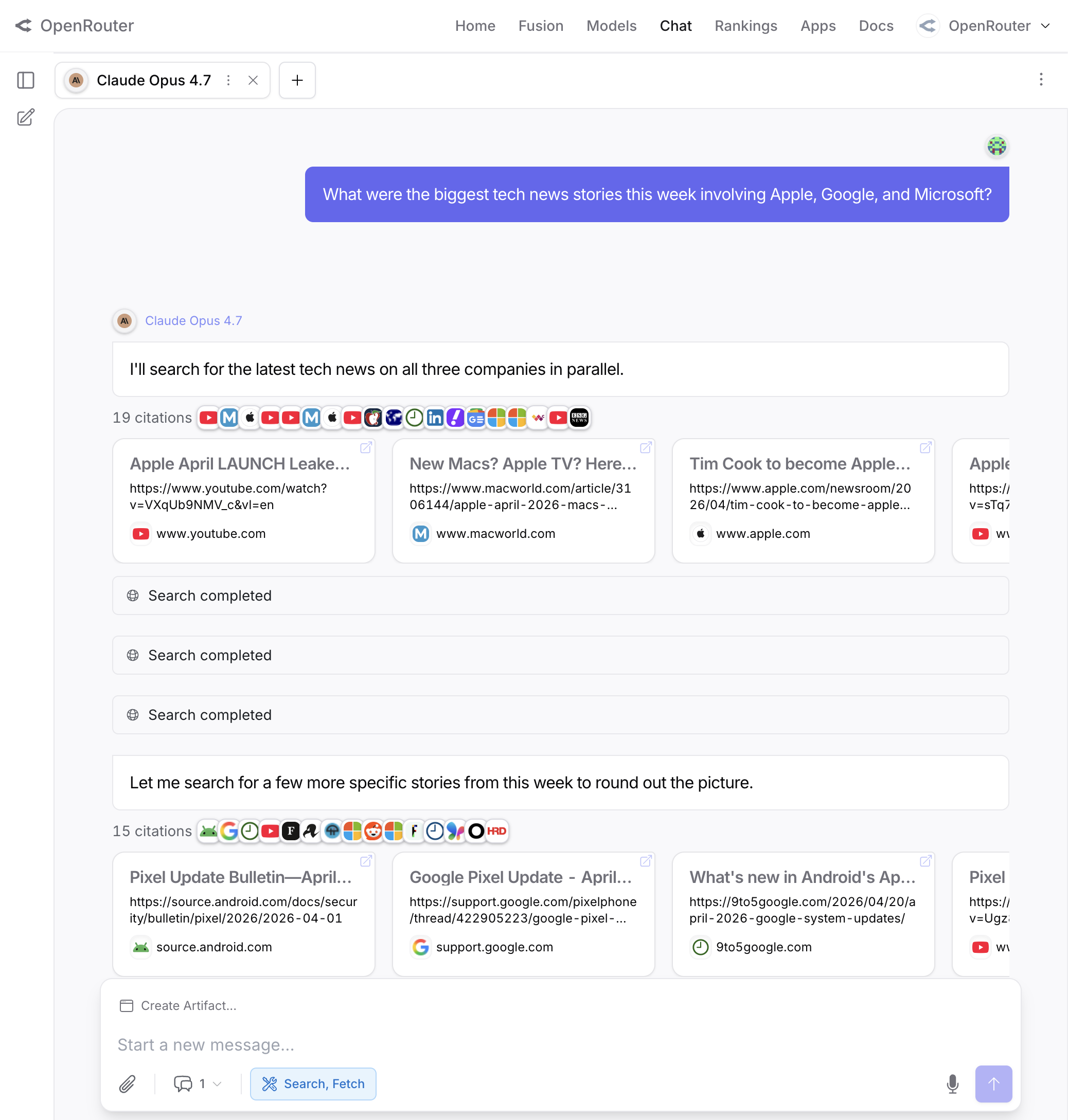
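On the API side, a configuration might look roughly like the sketch below. The parameter names `search_context_size` and `allowed_domains` come from the text; nesting them under a `parameters` key, the lowercase engine names, and the `"medium"` value are assumptions, so check the docs for the exact shape.

```python
# Engine names assumed to be the lowercase forms of the table entries.
KNOWN_SEARCH_ENGINES = {"auto", "native", "exa", "parallel"}


def web_search_tool(engine: str = "auto", **options) -> dict:
    """Return a web search tool definition with the chosen engine and options.

    Assumption: options are passed in a `parameters` object on the tool.
    """
    if engine not in KNOWN_SEARCH_ENGINES:
        raise ValueError(f"unknown engine: {engine!r}")
    return {
        "type": "openrouter:web_search",
        "parameters": {"engine": engine, **options},
    }


# Pin Exa and restrict results to a single documentation site.
tool = web_search_tool(
    "exa",
    search_context_size="medium",  # hypothetical value
    allowed_domains=["docs.python.org"],
)
```

Pinning the engine explicitly is what keeps options like `search_context_size` meaningful, since native engines ignore it.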

Parallel Searches in Agentic Loops

When a model needs to compare information across sources, it can fire multiple searches in a single request. A question like "compare the pricing of the top 3 cloud GPU providers" might trigger three separate searches, each with different queries, before the model synthesizes an answer.

Use max_total_results to cap cumulative results across all searches in a request. This keeps costs and context usage predictable:
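A hedged sketch of such a cap, together with the worst-case spend it implies for Exa. The `parameters` nesting and the lowercase engine name are assumptions, and the bound assumes the cap applies to every billed result.

```python
from decimal import Decimal

EXA_PER_RESULT = Decimal("0.004")  # USD, from the pricing table


def capped_search_tool(max_total_results: int) -> dict:
    """Web search tool limited to a cumulative number of results per request.

    Assumption: the option lives in a `parameters` object on the tool.
    """
    return {
        "type": "openrouter:web_search",
        "parameters": {"engine": "exa", "max_total_results": max_total_results},
    }


def worst_case_exa_cost(max_total_results: int) -> Decimal:
    """Upper bound on Exa search spend for one request under the cap."""
    return EXA_PER_RESULT * max_total_results


tool = capped_search_tool(15)
print(worst_case_exa_cost(15))
```

With a cap of 15 results, search spend for one request stays at or below $0.060 under these assumptions, however many separate searches the model runs.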

Once the cap is hit, the model gets a message saying the limit was reached instead of running another search.

Web Fetch

Web fetch lets models retrieve full page content from URLs and comes with four supported engines.

| Engine | How it works | Pricing |
|---|---|---|
| Auto (default) | Uses native if supported, otherwise Exa | Varies |
| Native | The provider's built-in fetch | Provider pricing |
| OpenRouter | Direct HTTP fetch by OpenRouter | Free |
| Exa | Content extraction and clean markdown output | $0.001 per fetch |

Specifying Exa or OpenRouter as the engine ensures consistent fetch behavior across all models, including the ability to restrict which URLs the model can fetch using allowed_domains and blocked_domains. Native provider fetch capabilities vary, so choose one of these engines if you need the parameters to be respected across models.

Use max_content_tokens to cap how much content the model receives (useful for large pages that would eat your context window):
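As an illustration, a fetch tool that pins the engine, limits page size, and restricts domains could be built like this. The parameter names are the ones mentioned above; the `parameters` nesting and the lowercase engine names are assumptions.

```python
from typing import Optional


def web_fetch_tool(
    engine: str,
    max_content_tokens: int,
    allowed_domains: Optional[list] = None,
    blocked_domains: Optional[list] = None,
) -> dict:
    """Return a web fetch tool definition, omitting filters that are unset.

    Assumption: options are passed in a `parameters` object on the tool.
    """
    params = {"engine": engine, "max_content_tokens": max_content_tokens}
    if allowed_domains is not None:
        params["allowed_domains"] = allowed_domains
    if blocked_domains is not None:
        params["blocked_domains"] = blocked_domains
    return {"type": "openrouter:web_fetch", "parameters": params}


# Fetch at most 4,000 tokens per page, and only from one trusted site.
fetch_tool = web_fetch_tool("openrouter", 4000, allowed_domains=["example.com"])
```

Choosing `openrouter` or `exa` here, rather than leaving the engine on auto, is what makes the domain restrictions apply regardless of the model.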

Migrating From the Web Search Plugin

Until now, models could only search through the web search plugin, which ran exactly one search per request regardless of what the model actually needed. The model had no say in when to search, what to search for, or whether to search at all.

To migrate, replace plugins with tools in your request body:

Before (plugin):

After (server tool):
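A rough sketch of the change, written as a helper that rewrites a request body. It assumes the plugin is enabled through a `plugins` array containing `{"id": "web"}`; the model slug is a placeholder, and the migration guide remains the authoritative reference.

```python
import copy


def migrate_to_server_tool(body: dict) -> dict:
    """Return a copy of `body` with the web plugin swapped for the server tool.

    Assumption: the plugin entry is identified by `"id": "web"`.
    """
    migrated = copy.deepcopy(body)
    plugins = migrated.pop("plugins", [])
    remaining = [p for p in plugins if p.get("id") != "web"]
    if len(remaining) != len(plugins):
        # The web plugin was present, so add the equivalent server tool.
        migrated.setdefault("tools", []).append({"type": "openrouter:web_search"})
    if remaining:
        # Keep any unrelated plugins untouched.
        migrated["plugins"] = remaining
    return migrated


before = {
    "model": "vendor-a/model-x",  # placeholder slug
    "messages": [{"role": "user", "content": "What happened in tech today?"}],
    "plugins": [{"id": "web"}],
}
after = migrate_to_server_tool(before)
```

Here `before` carries the plugin form and `after` carries a `tools` array with the server tool; the rest of the body is left as it was.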

Server tools let the model decide when and how often to search. One caveat: server tools require a model that supports tool calling. If your current model doesn't support tools, you'll need to switch to one that does or keep using the plugin.
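One way to handle this caveat in code is to pick the request shape based on whether the model supports tools. The sketch below assumes a model metadata record with a `supported_parameters` list that contains `"tools"` for tool-calling models, and that the plugin is enabled with `{"id": "web"}`; both the record layout and the helper names are illustrative assumptions.

```python
def supports_tool_calling(model_record: dict) -> bool:
    """Check a model metadata record for tool-calling support.

    Assumption: support is signalled by "tools" in `supported_parameters`.
    """
    return "tools" in model_record.get("supported_parameters", [])


def search_config_for(model_record: dict) -> dict:
    """Use the server tool when possible, otherwise fall back to the plugin."""
    if supports_tool_calling(model_record):
        return {"tools": [{"type": "openrouter:web_search"}]}
    return {"plugins": [{"id": "web"}]}


# Hypothetical records for illustration.
with_tools = {"id": "vendor-a/model-x", "supported_parameters": ["tools", "temperature"]}
without_tools = {"id": "vendor-b/model-y", "supported_parameters": ["temperature"]}
```

Merging the returned dictionary into the request body keeps the fallback decision in one place.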

We've created a migration guide with full details.